Mobile World Congress (MWC) 2026 in Barcelona is once again the global stage for the telecommunications industry. The event brings together over 100,000 attendees. Network operators, vendors, and enterprise leaders meet to discuss what comes next.

This year, a few dominant themes stand out: AI and agentic automation, network APIs, sovereign cloud architectures, autonomous networks, and 5G monetization. Each of these trends shares a common requirement. They all depend on the ability to move, process, and act on data continuously, in real time, and at massive scale.

That is exactly what a Data Streaming Platform built on Apache Kafka and Apache Flink delivers.

The following sections explore each of these five MWC trends, explain the concrete data streaming use cases behind them, and show why applied AI only works when it is built on a real-time data foundation.

Join the data streaming community and stay informed about new blog posts by subscribing to my newsletter, and follow me on LinkedIn or X (formerly Twitter) to stay in touch.

And download my free eBook, “The Ultimate Data Streaming Guide – Telecom Edition”, to learn about telco and media use cases.

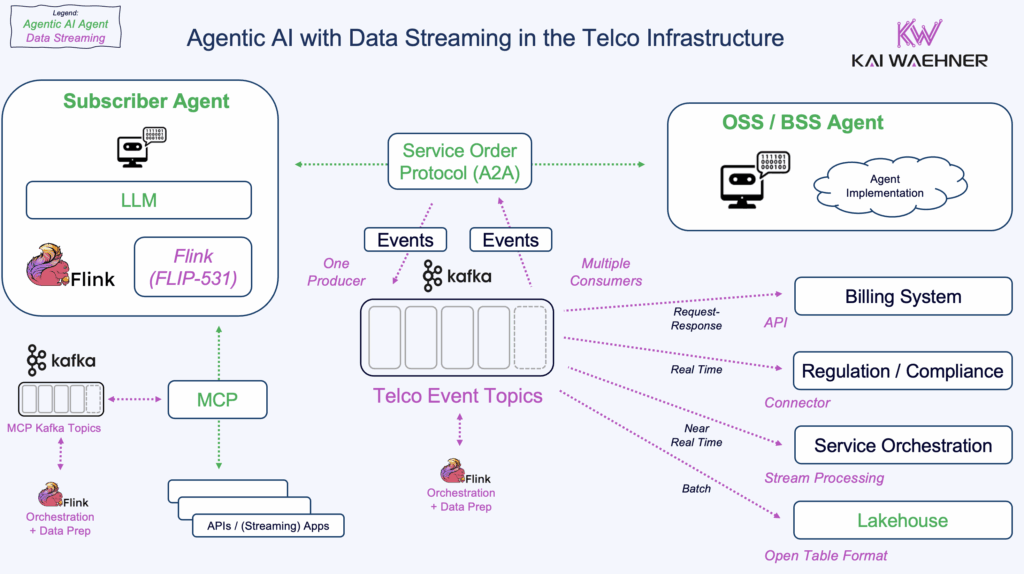

AI and Agentic AI in Telecom Networks

AI dominates the MWC 2026 agenda. The very first conference session on the first day is “The Agentic AI Summit”. Operators like LG Uplus and SK Telecom are showcasing AI orchestration platforms and personal AI assistants. Deutsche Telekom and KPN both want to see real, scaled AI deployments that go beyond proof of concept.

The message from telco executives is clear: Fewer conceptual narratives, more measurable impact.

Here is the challenge: AI models and agents are only as good as the data they consume. A large language model trained on last month’s network data will make decisions based on outdated information. An agentic AI system that reacts to a network anomaly from 30 minutes ago is too slow. By the time it responds, the problem has already cascaded.

Apache Kafka and Flink as the Real-Time Data Foundation for Telco AI

Data streaming with Kafka and Flink solves this problem at its root. Kafka continuously collects and distributes data from every part of the network: RAN, core, edge, BSS, OSS, and customer-facing applications. Flink processes that data as it flows, applying filters, transformations, aggregations, and pattern detection in milliseconds.

This combination gives AI models and agents access to fresh, contextual, high-quality data. A streaming agent built on top of this infrastructure can detect a service degradation, correlate it with customer impact data, and trigger a remediation action before a human operator even notices the alert. That is the difference between AI as a slideshow demo and AI that saves real money.

Telco executives are under growing pressure to adopt AI across the network. At the same time, they have been pitched too many concepts that never made it past a proof of concept. The patience for vaporware is running out. If there is no clear ROI, there is no budget. Real-time data streaming provides the missing layer that turns AI promises into measurable outcomes.

Real-time data is not optional for AI. It is the foundation.

Network APIs and Developer Ecosystems

Network APIs are gaining serious momentum. A dozen major operators and Ericsson have invested equity in Aduna, the network API joint venture. Aduna’s goal is to aggregate and commercialize standardized network APIs on a global scale, making network capabilities as accessible to developers as cloud services are today.

Nokia and Telefónica just announced a collaboration around agentic AI combined with APIs. Their goal is to expose network capabilities to external developers and enterprise customers, including quality of service, location, and device status.

The business opportunity is significant. But exposing APIs means handling several operational challenges at once, including:

- Unpredictable traffic patterns from external developers

- SLA enforcement across diverse API consumers

- Real-time rate limiting and abuse detection

- Accurate, usage-based billing

Data Streaming as the Backbone for API Monetization

Kafka serves as the backbone for API event processing. Every API call, every response, and every error can be published as an event to a Kafka topic. Flink then processes these streams to calculate usage metrics, detect abuse patterns, enforce throttling policies, and feed billing systems with accurate consumption data.
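
To make the metering and throttling idea concrete, here is a minimal sketch in plain Python. It does not use the Kafka or Flink APIs; it only imitates what a keyed tumbling-window aggregation would compute. The event schema, the API names, the one-minute window, and the quota of three calls are all assumptions made up for this example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiCallEvent:
    """One API call as it might be published to a topic (hypothetical schema)."""
    consumer_id: str
    api_name: str
    timestamp_ms: int
    status_code: int


WINDOW_MS = 60_000         # assumed one-minute tumbling window
MAX_CALLS_PER_WINDOW = 3   # assumed quota, chosen only for this example


def window_start(timestamp_ms: int) -> int:
    """Align an event timestamp to the start of its tumbling window."""
    return timestamp_ms - (timestamp_ms % WINDOW_MS)


def meter_usage(events):
    """Count calls per (consumer, window start), like a keyed window aggregation."""
    usage = Counter()
    for event in events:
        usage[(event.consumer_id, window_start(event.timestamp_ms))] += 1
    return usage


def consumers_to_throttle(usage):
    """Return the (consumer, window start) keys whose count exceeds the quota."""
    return sorted(key for key, count in usage.items() if count > MAX_CALLS_PER_WINDOW)


# Hypothetical API names and consumers, for illustration only.
events = [
    ApiCallEvent("dev-a", "quality-on-demand", 1_000, 200),
    ApiCallEvent("dev-a", "quality-on-demand", 2_000, 200),
    ApiCallEvent("dev-a", "device-location", 3_000, 200),
    ApiCallEvent("dev-a", "device-location", 4_000, 429),
    ApiCallEvent("dev-b", "device-status", 5_000, 200),
    ApiCallEvent("dev-a", "device-status", 61_000, 200),
]

usage = meter_usage(events)
print(usage[("dev-a", 0)])           # calls by dev-a in the first window
print(consumers_to_throttle(usage))  # keys that exceeded the quota
```

The same per-window counts can feed both the throttling decision and the billing system, which is why a single event stream is attractive here.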

Without an event-based architecture and streaming, API platforms rely on batch processing for analytics and billing. That means delayed insights, inaccurate invoices, and slow responses to misuse. With data streaming, the entire API lifecycle becomes observable and controllable in real time.

For telecom operators trying to monetize their network through APIs, this is not a nice-to-have. It is the operational backbone that makes the business model work.

Sovereign Cloud and Data Residency

Sovereignty is a major theme at MWC 2026, with several conference sessions focusing on building trusted ecosystems. Operators like KPN emphasize strict data residency and security requirements. The geopolitical landscape is driving telcos to keep data local and maintain control over where and how it is processed.

Regulations like GDPR and the EU AI Act raise the stakes even further. Sending customer data to US-based public cloud AI services creates compliance risks that many European telcos are no longer willing to accept. Sovereign infrastructure is not just a preference. It is becoming a legal requirement.

Apache Kafka for Sovereign Data Flow and Governance

Data streaming plays a critical role in sovereign architectures. Kafka can be deployed across multiple regions and environments: on premises, in private clouds, in sovereign clouds, and at the edge. Data replication between clusters can be configured with fine-grained control over what data moves where.

Flink adds the processing layer. Sensitive data can be filtered, masked, or transformed before it leaves a sovereign boundary. Governance policies embedded in the streaming platform ensure that personally identifiable information never crosses a border it should not cross.
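
As a rough illustration of masking before export, the following plain-Python sketch drops some fields and replaces others with a keyed pseudonym before a record would be replicated out of a sovereign region. The field classification, the key, and the record layout are assumptions for this example; a real deployment would take the classification from a governance catalog, manage the key in a secrets store, and validate the approach with its compliance team.

```python
import hashlib
import hmac

# Assumed field classification, hard-coded only for illustration.
PSEUDONYMIZE_FIELDS = {"msisdn"}          # kept as a stable pseudonym for joins
DROP_FIELDS = {"imsi", "customer_name"}   # never leave the sovereign boundary
PSEUDONYM_KEY = b"example-key-not-for-production"  # placeholder secret


def pseudonymize(value: str) -> str:
    """Replace a value with a keyed hash so equal inputs map to equal outputs."""
    digest = hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def mask_for_export(record: dict) -> dict:
    """Return a copy of the record that is allowed to cross the boundary."""
    masked = {}
    for field, value in record.items():
        if field in DROP_FIELDS:
            continue
        if field in PSEUDONYMIZE_FIELDS:
            masked[field] = pseudonymize(str(value))
        else:
            masked[field] = value
    return masked


# Made-up record with placeholder identifiers.
record = {
    "msisdn": "+34600000000",
    "imsi": "214070000000000",
    "customer_name": "Example Customer",
    "cell_id": "cell-042",
    "dropped_calls": 3,
}

exported = mask_for_export(record)
print(sorted(exported))  # field names that remain after masking
```

In a streaming job, a function like `mask_for_export` would run as a per-record transformation on the stream that feeds cross-region replication, so the unmasked record never reaches the remote cluster.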

This matters because the sovereign cloud is not just about where the compute runs. It is about controlling the flow of data across the entire infrastructure. A Data Streaming Platform provides that control natively, with built-in governance, lineage tracking, and security.

Sovereignty starts with the data layer. If the data pipeline is not sovereign, the cloud is not sovereign either.

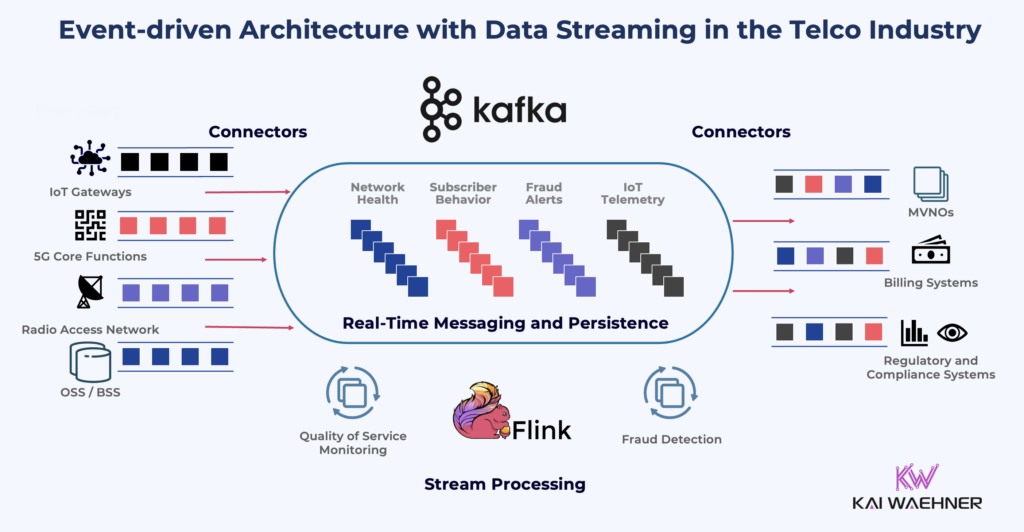

Autonomous Network Operations

Multiple telco executives at MWC 2026 are asking the same question: How far have autonomous networks actually come? KPN wants to see systems that do not just flag a problem but actually fix it. Orange is tracking how AI can bring more than just efficiency and potentially drive new revenue streams.

Autonomous networks require a closed loop: sense, decide, act. That loop must run continuously, not in batch cycles.

Stream Processing with Apache Flink for Closed-Loop Network Automation

Kafka ingests telemetry and event data from every network element. Cell towers, switches, routers, edge nodes, and customer devices all generate streams of operational data. Flink processes these streams to detect anomalies, predict failures, and trigger automated responses.

Consider a concrete example:

- A cell tower starts dropping packets at an unusual rate

- Flink detects the anomaly within seconds by comparing current behavior against historical baselines

- It correlates the event with weather data and traffic patterns from neighboring towers

- The system automatically reroutes traffic and opens a maintenance ticket

- No human intervention needed
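
The steps above can be sketched as a tiny sense, decide, act loop in plain Python. This is not Flink code. The z-score threshold, the baseline values, the tower ID, and the action names are assumptions chosen for the example, and a simple z-score stands in for whatever anomaly detection an operator actually uses.

```python
from statistics import mean, stdev

Z_THRESHOLD = 3.0  # assumed sensitivity; tune per metric in practice


def is_anomalous(baseline, current):
    """Sense: flag a packet-loss rate far above the historical baseline."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return current > mu
    return (current - mu) / sigma > Z_THRESHOLD


def decide(tower_id, baseline, current, neighbors_degraded, storm_warning):
    """Decide: correlate with context and return the actions to execute."""
    if not is_anomalous(baseline, current):
        return []
    # Correlation step: bad weather plus degraded neighbors suggests an
    # external cause; otherwise suspect a fault local to this tower.
    cause = "weather" if storm_warning and neighbors_degraded else "local_fault"
    return [
        ("reroute_traffic", tower_id),
        ("open_ticket", f"{tower_id}:{cause}"),
    ]


# Hypothetical historical packet-loss rates for one tower.
baseline_loss = [0.010, 0.012, 0.011, 0.009, 0.010, 0.011, 0.012, 0.010]

# Act: in production these tuples would be published as command events.
print(decide("tower-17", baseline_loss, 0.080, False, False))
print(decide("tower-17", baseline_loss, 0.011, False, False))
```

In a real deployment the baseline would be keyed state maintained by the stream processor, and the returned actions would go to an orchestration system rather than to `print`.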

This is not theoretical. These are the types of use cases that operators want to see proven and in production. Autonomous networks cannot run on stale data. The closed loop only works if the data layer is streaming.

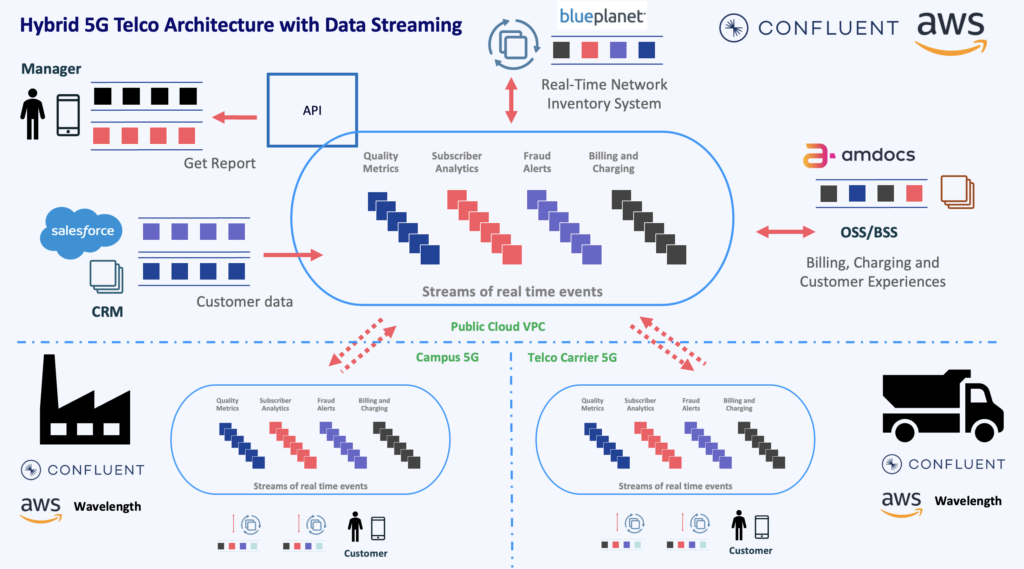

5G Monetization and Enterprise Services

One of the most direct questions at MWC 2026 comes from a conference session title: “5G’s Inconvenient Truth: How Do We Make Money?” VodafoneThree wants real-world examples of businesses using 5G to drive digital transformation. AT&T Business is looking at new business models in non-traditional areas like hosting, finance, and gaming.

5G monetization depends on differentiated services. Network slicing, edge computing, and quality of service guarantees all promise premium revenue. But delivering these services requires continuous visibility into:

- Network performance per slice and per customer

- SLA compliance metrics like latency, throughput, and availability

- Resource consumption for usage-based billing

- Customer experience indicators in real time

Real-Time Data Streaming for 5G SLA Management and Billing

Kafka and Flink provide that visibility. Network slice telemetry flows through Kafka topics. Flink continuously monitors SLA metrics per slice and per customer. If a slice starts degrading, the system can adjust resources or notify the customer before they even notice.
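
A minimal sketch of per-slice SLA monitoring, again in plain Python rather than Flink: it keeps a small sliding window of latency samples per slice and customer and classifies the rolling average against a target. The slice names, the latency targets, the window size, and the 80% early-warning margin are assumptions for this example.

```python
from collections import defaultdict, deque

WINDOW_SIZE = 5          # assumed number of most recent samples to average
AT_RISK_FRACTION = 0.8   # assumed early-warning margin below the target

# Hypothetical latency targets in milliseconds per slice.
SLA_LATENCY_MS = {"slice-urllc": 10.0, "slice-embb": 50.0}


class SliceSlaMonitor:
    """Tracks a rolling average latency per (slice, customer) key."""

    def __init__(self):
        self._samples = defaultdict(lambda: deque(maxlen=WINDOW_SIZE))

    def observe(self, slice_id, customer_id, latency_ms):
        """Add one sample and return 'ok', 'at_risk', or 'breached'."""
        window = self._samples[(slice_id, customer_id)]
        window.append(latency_ms)
        average = sum(window) / len(window)
        target = SLA_LATENCY_MS[slice_id]
        if average > target:
            return "breached"
        if average > AT_RISK_FRACTION * target:
            return "at_risk"
        return "ok"


monitor = SliceSlaMonitor()
statuses = [
    monitor.observe("slice-urllc", "acme", latency)
    for latency in [5, 6, 7, 12, 15, 20]
]
print(statuses)
```

The `at_risk` state is the interesting one: it is the point where the system can still add resources or notify the customer before the SLA is actually breached.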

On the billing side, data streaming enables usage-based pricing models with real-time metering. Enterprise customers consuming network slices or edge compute resources get accurate, timely invoices. Operators get faster revenue recognition and fewer disputes.
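
To illustrate the rating step behind such metering, here is a small plain-Python sketch that sums metered quantities per customer and resource and applies a price list. The resource names, prices, and customers are invented for this example, and `Decimal` is used so that monetary amounts are not subject to binary floating-point rounding.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical price list per metered unit.
PRICE_PER_UNIT = {
    "slice_gb": Decimal("0.50"),
    "edge_cpu_hour": Decimal("0.12"),
}


def rate_usage(metering_events):
    """Aggregate (customer, resource, quantity) events into a charge per customer."""
    quantities = defaultdict(Decimal)
    for customer_id, resource, quantity in metering_events:
        quantities[(customer_id, resource)] += Decimal(quantity)

    charges = defaultdict(Decimal)
    for (customer_id, resource), quantity in quantities.items():
        charges[customer_id] += quantity * PRICE_PER_UNIT[resource]
    return dict(charges)


# Quantities are passed as strings so Decimal parses them exactly.
metering_events = [
    ("acme", "slice_gb", "10"),
    ("acme", "slice_gb", "2.5"),
    ("acme", "edge_cpu_hour", "100"),
    ("globex", "slice_gb", "4"),
]

charges = rate_usage(metering_events)
for customer_id in sorted(charges):
    print(customer_id, charges[customer_id])
```

In a streaming setup the quantities would be updated continuously as metering events arrive, so a running charge is available at any time instead of only after a batch billing run.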

The broader point is that 5G monetization is not a connectivity play; it’s a data play. The operator that can measure, optimize, and bill for differentiated services in real time wins the enterprise customer.

When 5G was first introduced, the industry expected a wave of new B2B revenue from network slicing, edge computing, and enterprise connectivity services. Six or seven years later, much of that revenue has not materialized. The technology was ready, but the operational backbone to deliver, measure, and bill for differentiated 5G services was not. Network APIs and programmable 5G capabilities offer another shot at capturing that enterprise opportunity. But this time, operators cannot afford to repeat the same mistake. Real-time data streaming ensures that every 5G service can be monitored, optimized, and monetized from day one. Without it, 5G remains a faster pipe instead of a revenue platform.

The Role of Applied AI Across All Trends

Every telecom trend discussed above intersects with AI:

- Agentic AI for network operations

- AI-driven management of network APIs

- Sovereign data classification powered by AI

- Autonomous remediation through intelligent automation

- Predictive SLA management using machine learning

But AI without real time data is like a pilot without instruments. Decisions get made, but they are based on guesswork.

Applied AI in telecom requires three things: fresh data, contextual enrichment, and connectivity to operational systems. A Data Streaming Platform provides all three. Kafka connects to hundreds of data sources and sinks across the telecom stack. Flink enriches and transforms data in flight. Together, they feed AI models and agents with the information they need to make accurate, timely decisions.
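
Enrichment in flight can be pictured as a join between an event stream and a table of reference data. The plain-Python sketch below shows the idea with a generator and a dictionary lookup. The event fields, the context table, and the cell IDs are assumptions for this example; in a real pipeline the table would typically be state maintained by the stream processor.

```python
def enrich_stream(events, context_by_cell):
    """Attach customer context to each network event as it passes through."""
    for event in events:
        context = context_by_cell.get(event["cell_id"])
        if context is None:
            # Keep the event, but mark it so downstream consumers know
            # that no context was available.
            yield {**event, "context_found": False}
        else:
            yield {**event, **context, "context_found": True}


# Hypothetical reference data keyed by cell ID.
context_by_cell = {
    "cell-042": {"region": "north", "enterprise_customers": 3},
}

# Hypothetical raw network events.
raw_events = [
    {"cell_id": "cell-042", "metric": "packet_loss", "value": 0.08},
    {"cell_id": "cell-999", "metric": "packet_loss", "value": 0.02},
]

enriched = list(enrich_stream(raw_events, context_by_cell))
for event in enriched:
    print(event)
```

An AI model or agent that receives the enriched event sees not only that packet loss is high, but also which region and how many enterprise customers are affected, which is the context it needs to prioritize a response.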

Streaming agents built on this foundation do not just analyze. They act. They connect insights to outcomes. That is the difference between AI experimentation and AI in production.

The Telecom Industry Is Moving from Batch to Data Streaming

Each of the five trends above represents a concrete business case for data streaming. Whether the conversation is about AI, APIs, sovereignty, autonomy, or 5G monetization, the underlying requirement is the same: continuous, governed, real-time data flow.

MWC 2026 makes this clearer than ever. The operators and vendors that build their AI, their APIs, and their autonomous operations on a streaming foundation will lead. The rest will be catching up.
